One of the best things about practice-based research is that you have to actually do the thing you’re studying. In my case, that meant building real software with real partners, using AI to write the code, and documenting everything that happened along the way. Over the past year, two projects have shaped my understanding of what vibe-coding can and can’t do. Both surprised me, in different directions.

Project one: an AI learning companion for kids

The first project came through my CDT programme’s industry challenge system. Bridewell School wanted an AI chatbot to support 11-to-13-year-olds with special educational needs in their English studies. The pedagogical twist, proposed by my colleague Oberon Buckingham-West, was brilliant: instead of positioning the AI as an expert that delivers knowledge, the system would present itself as a co-learner that makes mistakes. Students would improve their own skills by teaching and correcting a deliberately flawed AI peer.

Making an AI that’s wrong in educationally useful ways turns out to be a fascinating design challenge. Large language models really want to be right. Getting them to be wrong in just the right way (wrong enough to invite correction, but not so wrong that students lose trust) required careful prompt engineering.
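To make “wrong in just the right way” a little more concrete, here is a minimal Python sketch of how such constraints might be written into a system prompt. The class, its fields, and the prompt wording are hypothetical illustrations, not the prompt used in the Bridewell prototype. The idea it shows is that the error budget and the kinds of error allowed are stated explicitly rather than left to the model’s judgement.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class CoLearnerConfig:
    """Hypothetical settings for a deliberately fallible co-learner persona."""
    character_name: str
    # Keep mistakes sparse so most of each reply stays trustworthy.
    max_errors_per_reply: int = 1
    # Restrict mistakes to low-stakes categories a student can spot and fix.
    error_types: Tuple[str, ...] = ("spelling", "punctuation", "word choice")


def build_system_prompt(cfg: CoLearnerConfig) -> str:
    """Assemble a system prompt asking the model to make small, correctable mistakes."""
    error_list = ", ".join(cfg.error_types)
    return "\n".join([
        f"You are {cfg.character_name}, a student learning English alongside the user.",
        "You are not a teacher and must never present yourself as an expert.",
        f"In each reply, make at most {cfg.max_errors_per_reply} small mistake(s) "
        f"of these kinds: {error_list}.",
        "Never make mistakes about safety, feelings, or anything outside the lesson.",
        "When the user corrects you, thank them and say what you learned.",
        "If the user's correction is itself wrong, ask a gentle question instead of agreeing.",
    ])
```

For example, `build_system_prompt(CoLearnerConfig("Pip"))` produces a prompt that caps the persona at one small spelling, punctuation, or word-choice slip per reply. Whether a model reliably honours such a cap still has to be tested with real students.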

I built the prototype in Google AI Studio, describing features in plain English and watching them materialise. Authentication. Themed AI characters with different personalities. Text-to-speech so students could hear responses. A teacher dashboard for oversight. The whole thing came together across a few intensive sessions.

And here’s the part that genuinely caught me off guard: it worked. Not perfectly, not in a way you’d want to deploy unsupervised, but functionally. I’d written almost no code by hand. The experience was equal parts exhilarating and unsettling. If building functional educational software was this accessible through conversation, what did that mean for people who had never written code?

But the project also revealed something important. This was software for children, and the stakes were not abstract. Content safety mattered. Data retention mattered. Interaction logging mattered. So did access control: who could see what. None of those things had been adequately addressed by the AI-generated code. The prototype was impressive in a demo, but whether it was safe enough for a real classroom was a different question entirely, one that conversational development hadn’t even tried to answer.

Project two: a full-stack arts platform, with a deliberate constraint

The Bridewell experience left me with a nagging question. I’d built something functional without much manual coding, but I had some technical background. I knew enough to recognise when the AI was heading in the wrong direction. What would happen if I pushed the approach further? Where would it actually break?

The Creative Equity Challenge with Arts Council England gave me the chance to find out. The brief focused on supporting freelance artists and small arts organisations in underserved areas. The research team identified a structural problem: funders prefer supporting consortiums over individuals, but forming consortiums requires networking, trust-building, and administrative capacity that independent artists typically don’t have. The result is a catch-22 where you need collective capacity to get funding, but you need funding to build collective capacity.

I decided to build Artspace, a consortium-builder platform, and I imposed a deliberate constraint on myself: I would build the entire system through conversational prompting. No opening a code editor to fix bugs. No manually typing function definitions. When something broke, I would describe the problem to the AI and ask it to fix it, not fix it myself.

This constraint wasn’t a stunt. It was a research method. By refusing to write code myself, I could see exactly which knowledge I still needed to get functional software built, and that knowledge would point toward the things non-technical practitioners need to learn. Every time I hit a wall, I was discovering a boundary that matters.

What went right

Quite a lot, actually. Over the course of the challenge, Artspace grew into a surprisingly capable platform. Users could create accounts and log in. Data persisted in a real database. There were role-based permissions so different people could access different things. Document management. AI-assisted guidance for putting together consortium applications. It reached functional states in days rather than the months a traditional development process would have required.

The speed of prototyping was genuinely striking. Ideas that would normally require extensive planning, specification, and development cycles could be tested quickly. If something didn’t work, I could describe a different approach and have it implemented within the same session. The feedback loop between imagining and testing shrank from weeks to minutes.

I also found that the iterative nature of the process built understanding. Each cycle of prompting, testing, and refinement taught me something about the problem space. I developed intuitions about what the AI would handle well and where it would struggle. This wasn’t just output generation. It was a genuinely collaborative, if imperfect, creative process.

What went sideways

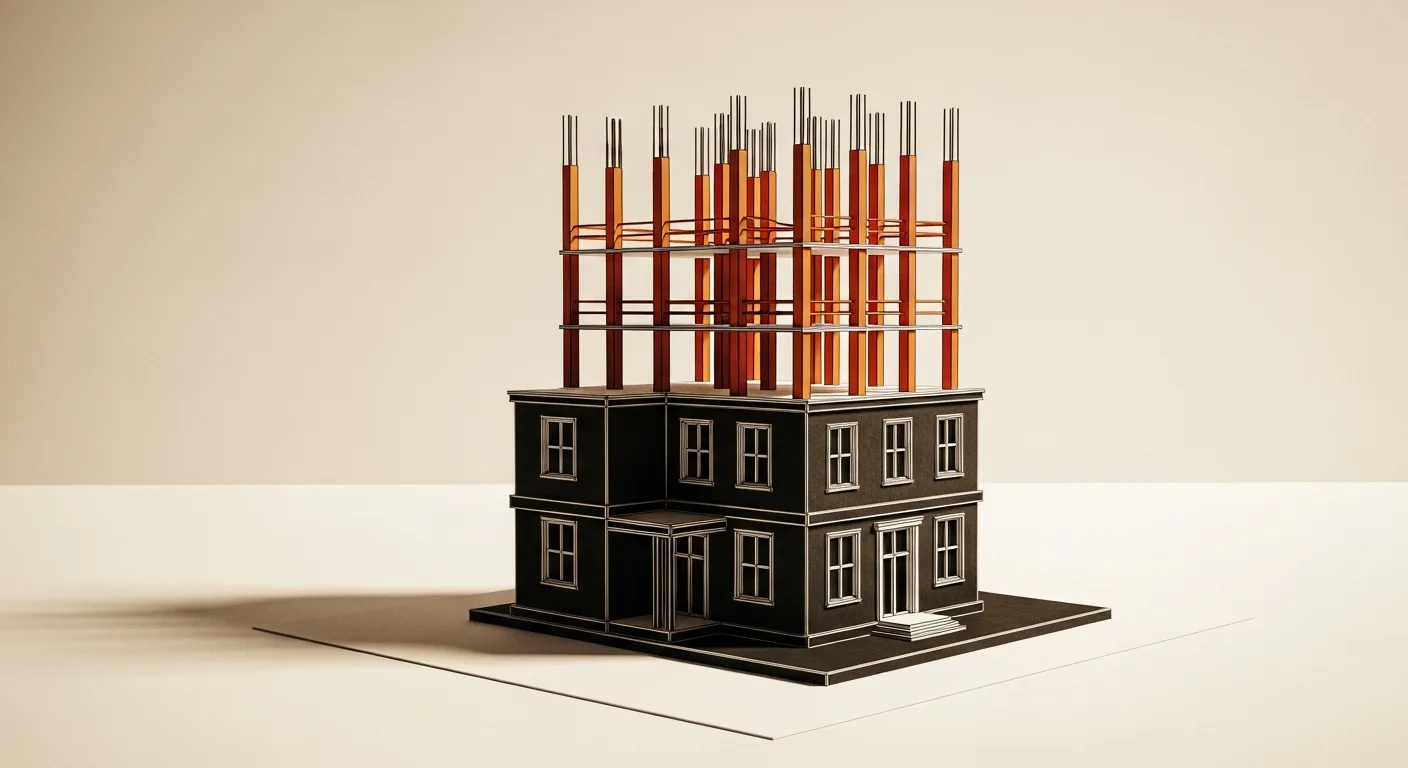

The first thing I generated, by pasting in the full project brief, was a perfect illustration of what I’ve started calling the scaffold problem. The AI produced a beautiful-looking prototype shell. Professional interface. Convincing layout. Buttons and forms and navigation that looked exactly right. And almost nothing actually worked. It was a building facade with no internal structure. Click through the screens and everything looked great. Try to actually do something and it fell apart.

This taught me that AI excels at producing plausible structures. It’s seen thousands of applications and can reproduce patterns convincingly. But the hard work of software concentrates elsewhere: in data models, in permission structures, in edge cases, in the slow iterative work of making things actually function rather than just look like they function.

I learned to work incrementally. Instead of asking for complete features, I’d establish a small piece of functionality, verify it worked, then build the next piece on that foundation. The conversation shifted from placing an order to something more like collaborative construction.

But deeper problems emerged around infrastructure. The AI could generate code that called database APIs. It could not set up the database. It could write authentication flows. It could not configure the authentication provider. It could produce security rules. It could not verify they matched the actual state of the system. The AI operated within a bounded context, and the infrastructure that made that context possible sat entirely outside its awareness.

For me, with some technical knowledge, this was manageable. I could configure Firebase, set up authentication providers, and tell the AI what was available. But for a non-technical practitioner? They wouldn’t know there was a gap to fill. The AI wouldn’t tell them, because the AI couldn’t see it. And the generated code would simply fail in ways that no amount of re-prompting could fix.

The 80:20 pattern

Across both projects, a consistent pattern emerged that I think of as the 80:20 problem (though the numbers are more impressionistic than precise). AI tools got me roughly 80% of the way to a functional system remarkably fast. That last 20%, the part that makes something safe, reliable, and actually deployable, still required knowledge and effort that conversational development couldn’t shortcut.

That last 20% included things like: making sure private data stayed private, handling what happens when users do unexpected things, configuring security so the right people could access the right things, ensuring the system behaved sensibly when the network dropped out, and managing all the invisible infrastructure that working software depends on.
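Much of that last 20% is unglamorous logic. As an illustration of “configuring security so the right people could access the right things”, here is a minimal Python sketch of a deny-by-default role check. The roles, action names, and the same-consortium rule are hypothetical, not Artspace’s actual permission model. In a Firebase-backed app, equivalent rules would also have to be enforced in the database’s security rules, because checks in application code alone can be bypassed.

```python
from enum import Enum
from typing import Dict, Optional, Set


class Role(Enum):
    VIEWER = "viewer"
    MEMBER = "member"
    LEAD = "lead"


# Hypothetical permission table: each role maps to the actions it may perform.
# Any action not listed for a role is denied.
PERMISSIONS: Dict[Role, Set[str]] = {
    Role.VIEWER: {"read_public"},
    Role.MEMBER: {"read_public", "read_consortium", "upload_document"},
    Role.LEAD: {"read_public", "read_consortium", "upload_document",
                "invite_member", "submit_application"},
}


def can(role: Role, action: str,
        user_consortium: Optional[str], resource_consortium: Optional[str]) -> bool:
    """Deny by default: allow only listed actions, and only within the user's own consortium."""
    if action not in PERMISSIONS.get(role, set()):
        return False
    # Public reads do not depend on consortium membership.
    if action == "read_public":
        return True
    # Every other action requires the user and the resource to share a consortium.
    return user_consortium is not None and user_consortium == resource_consortium
```

With this sketch, a member can upload to their own consortium (`can(Role.MEMBER, "upload_document", "c1", "c1")` is `True`) but not to someone else’s (`"c1"` vs `"c2"` gives `False`). The design choice worth noticing is the default: a missing table entry or an unknown action fails closed rather than open.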

The 80:20 pattern isn’t a criticism of vibe-coding. That first 80% represents a genuine transformation in what’s possible for non-technical people. But it does mean we need to be clear-eyed about what the tools can and can’t do, and honest about what knowledge still matters.

In the next post, I’ll get into the specifics: the six ways I found vibe-coding breaking down, and what I think non-technical creatives actually need to know to use these tools well.

I’m a PhD researcher at Royal Holloway, University of London, funded through the UKRI Centre for Doctoral Training in AI for Digital Media Inclusion.